Fourth Robotics and ROS in Zurich Meetup

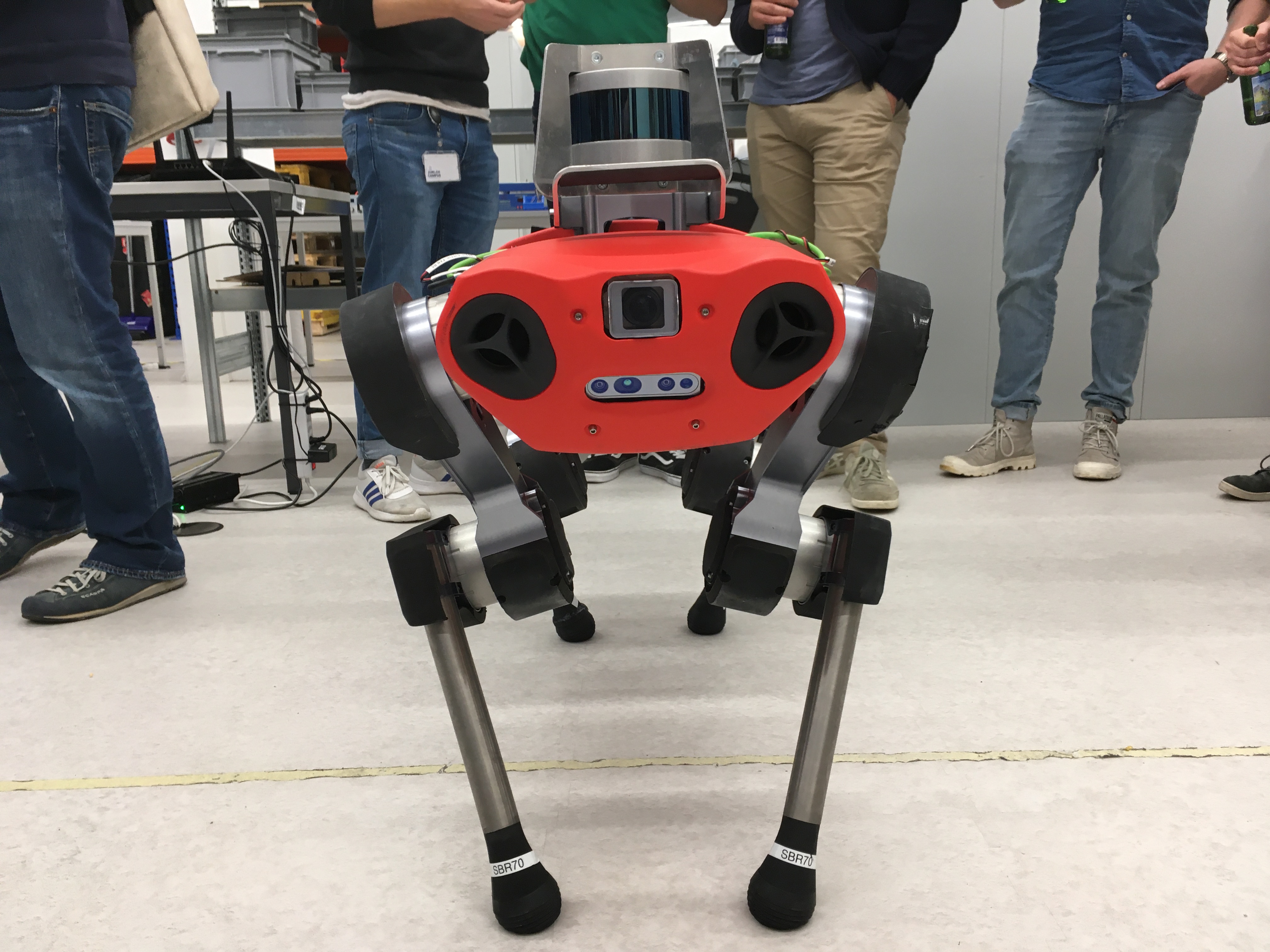

On Wednesday evening, 16 October, we attended the 4th Robotics and ROS in Zurich meetup. It was our first meetup after the summer break. This time, it was co-organized by ICCLab and ANYbotics, with ANYbotics providing their office space for the meeting and demo. We managed to gather around 50 interested attendees. Everything started […]