In his excellent article in Linux Technical Review #04, Jens-Christoph Brendel proposes a new way to implement High Availability (HA) in current IT architectures. According to Brendel, modern IT architectures continually gain in complexity, which makes it difficult to guarantee a given level of availability. Nevertheless, High Availability is not merely a competitive advantage: for many companies, keeping availability above 99.999% per year is a matter of survival. A few systematic steps should therefore help in planning and implementing high availability in your IT environment. This article outlines a possible strategy for planning High Availability in the Mobile Cloud environment.

Redundancy vs. Complexity

According to Brendel, every HA strategy starts with an evaluation of the degree of availability each architecture component requires. In principle, availability can be increased by adding redundant components (as mentioned in my previous article). On the other hand, every new component makes the overall system more complex and adds possible points of failure. In short, there is always a trade-off between protecting against component outages and adding complexity to the overall architecture. For the OpenStack environment, this means one has to classify the different OpenStack components according to the availability an OpenStack user requires.
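The trade-off can be made concrete with a little arithmetic. The following Python sketch is only an illustration, not part of Brendel's article: it assumes that components fail independently, and the availability figures and the extra "failover" component are hypothetical values chosen for the example.

```python
from math import prod


def series(availabilities):
    """Availability of components that must ALL work (a chain)."""
    return prod(availabilities)


def parallel(availabilities):
    """Availability of redundant components where ONE working copy is enough."""
    return 1 - prod(1 - a for a in availabilities)


# Hypothetical figures, chosen only for illustration.
single_node = 0.99   # one node on its own
failover = 0.999     # extra component needed to switch between the nodes

redundant_pair = parallel([single_node, single_node])
with_failover = series([redundant_pair, failover])

print(f"single node:           {single_node:.6f}")
print(f"redundant pair:        {redundant_pair:.6f}")
print(f"pair behind failover:  {with_failover:.6f}")
```

Under these assumptions, two 99% nodes in parallel reach about 99.99%, but the 99.9% failover mechanism sitting in series pulls the total back to roughly 99.89%. The redundancy still helps, yet the added component caps the gain.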

AEC-classification proposal for OpenStack

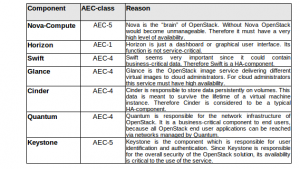

One possible classification for IT components is the AEC classification (Availability Environment Classification) developed by the Harvard Research Group. The AEC classes range from AEC-0 (non-critical systems, typically 90% availability) to AEC-5 (disaster-tolerant systems, 99.999% or “Five-Nines” availability). OpenStack basically consists of the following components: Nova (including Nova-Compute, Nova-Volume and Nova-Network), Horizon, Swift (ObjectStore), Glance, Cinder, Quantum and Keystone. A typical OpenStack end user has to deal with these components in order to manage a cloud installation. One has to think about the targeted availability level of each component in order to judge the overall stability of the OpenStack cloud environment. Some components need not be AEC-5, but for others AEC-5 is a must. The accompanying table is a proposal of AEC classes for each of the OpenStack components.
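To put such availability percentages into perspective, it helps to convert them into a yearly downtime budget. The short Python sketch below does this. The function name is made up for this example, and a year is assumed to have 365 days.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # assumption: non-leap year


def downtime_minutes_per_year(availability):
    """Return the allowed downtime in minutes per year for an availability in [0, 1]."""
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    return (1.0 - availability) * MINUTES_PER_YEAR


# The two ends of the range quoted in the text.
for label, availability in [("90%", 0.90), ("99.999%", 0.99999)]:
    minutes = downtime_minutes_per_year(availability)
    print(f"{label:>8}: {minutes:10.2f} minutes of downtime per year")
```

At 90% availability, a system may be down for about 36.5 days per year. At "Five-Nines", the budget shrinks to a little over five minutes.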

Of course, the real availability architecture of a production OpenStack implementation also depends on how many OpenStack nodes are used and on the underlying virtual and even physical infrastructure. Still, this proposal serves as a good starting point for thinking about adequate levels of availability in production OpenStack architectures. How do we secure critical components like Nova or Keystone against failures? Any OpenStack HA strategy must focus on this question first.

Risk Management and the “Chaos Monkey”

The next steps towards developing an OpenStack HA strategy are risk identification and risk management. The risk of a component failure obviously depends on the underlying physical and virtual infrastructure of the current OpenStack implementation and on the requirements of the end users. To investigate risk probabilities and impacts, however, we need to test what happens to the OpenStack cloud if some components fail. One such test is the “Chaos Monkey” test developed by Netflix. A “Chaos Monkey” is a service that identifies groups of systems in an IT environment and randomly terminates some of them. This random termination shows how a complex IT environment behaves when some of its systems fail unexpectedly. The risk of component failures in an OpenStack implementation could be tested by using such Chaos Monkey services. By running multiple tests on multiple OpenStack configurations, one can learn whether the current architecture is able to reach the required availability level.
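The idea behind such a test can be sketched as a small simulation. The Python code below is a toy model, not Netflix's Chaos Monkey, and it does not touch a real OpenStack installation. The service names, replica counts, kill probability and function names are all hypothetical. Each instance is terminated independently with a fixed probability, and a service counts as failed when none of its instances survive.

```python
import random


def chaos_trial(replicas, kill_probability, rng):
    """Terminate each instance at random; return the services left without any instance."""
    failed = set()
    for service, count in replicas.items():
        survivors = sum(1 for _ in range(count) if rng.random() >= kill_probability)
        if survivors == 0:
            failed.add(service)
    return failed


def survival_rate(replicas, kill_probability, trials=10_000, seed=42):
    """Fraction of trials in which every service kept at least one running instance."""
    rng = random.Random(seed)  # seeded so that runs are repeatable
    ok = sum(1 for _ in range(trials) if not chaos_trial(replicas, kill_probability, rng))
    return ok / trials


# Hypothetical layouts: one instance per service vs. two instances per service.
single = {"keystone": 1, "nova-api": 1, "glance": 1}
redundant = {"keystone": 2, "nova-api": 2, "glance": 2}

for name, layout in [("single", single), ("redundant", redundant)]:
    rate = survival_rate(layout, kill_probability=0.1)
    print(f"{name:>9}: all services survived in {rate:.1%} of trials")
```

Comparing the survival rates of different layouts gives a rough first impression of how much redundancy a configuration needs. A real test would of course terminate actual instances and observe how the cloud reacts.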

Further thoughts

Should OpenStack improve in terms of availability and redundancy? According to TechTarget, the OpenStack Grizzly release should be more scalable and reliable than previous releases. A Chaos Monkey test could reveal whether the decentralization of components like Keystone or Cinder leads to higher availability levels.
