In the world of containerized architectures, a range of new container deployment and orchestration tools help turn monolithic applications into running composite microservices. Some are intended for development environments, like Docker Compose, and others for production environments, like Kubernetes, Docker Swarm or Marathon. There are also tools that run atop other container schedulers, like Rancher or Vamp. In this blog post, we take a look at Vamp, while we continue to inspect the alternatives in order to eventually compare all solutions.

Vamp is an open source microservice platform that, in this competitive new environment, focuses on making canary releases possible and easy and on enabling autoscaling, among other advantages. The current version of Vamp is 0.9.1, and its Git repository sees quite active changes.

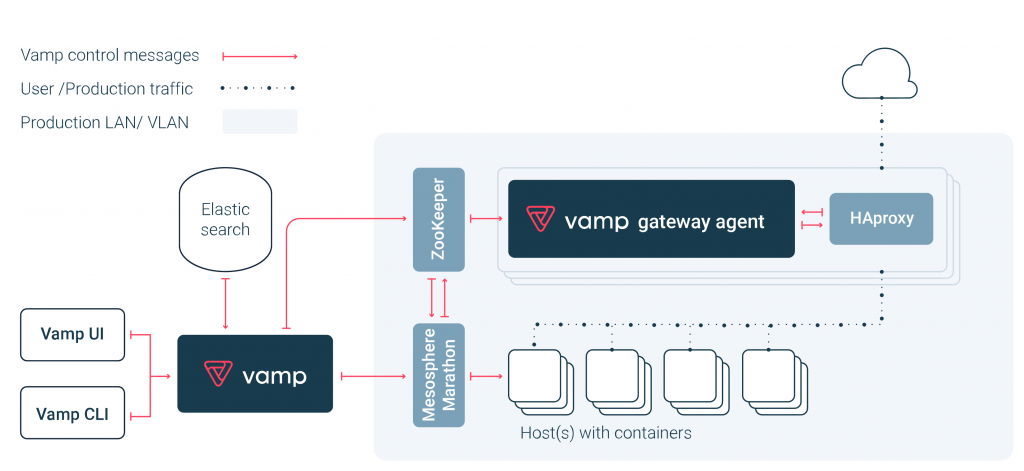

In the previous image (from the Vamp documentation), we can see the topology of Vamp. The requirements that Vamp needs for its work are:

- Container scheduler (one of: Marathon, DC/OS, Kubernetes, Rancher, Docker Swarm)

- Key-value store (one of: etcd, Consul, ZooKeeper)

- Load balancer (HAProxy)

- Search engine (Elasticsearch)

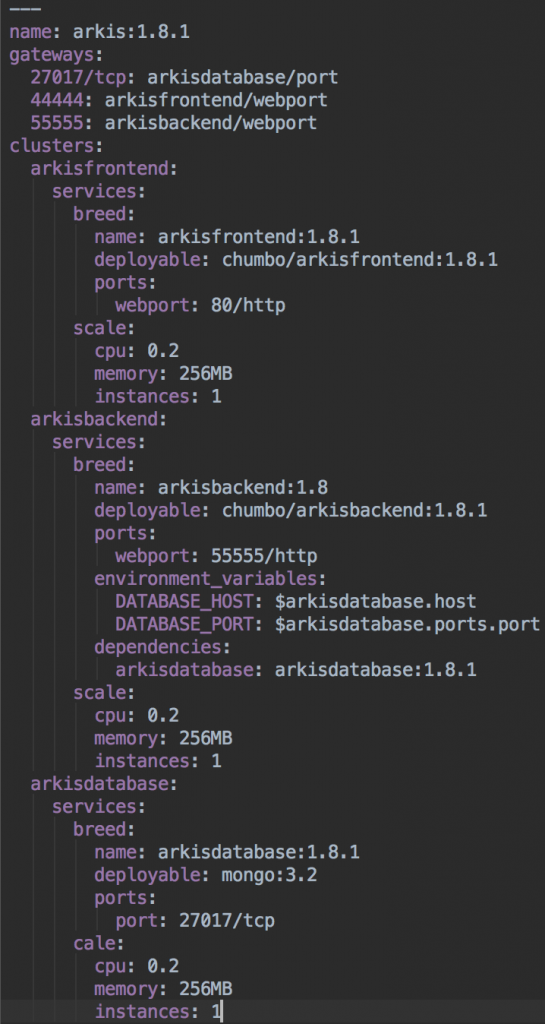

To create a blueprint for describing the (micro)services, connections and dependencies between them, gateways, environment variables or the properties for scaling these services, Vamp provides its own domain-specific language (DSL) in a YAML format.

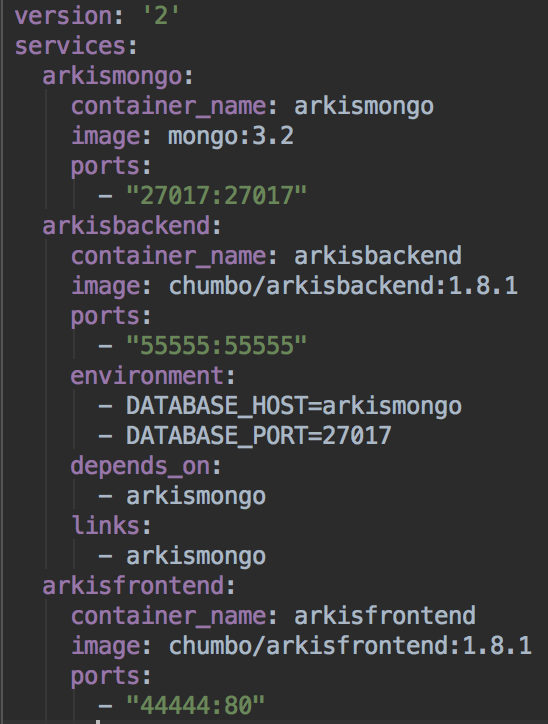

We demonstrate how to take a simple three-tier architecture, which we run in a development environment with docker-compose, and deploy it into a production environment using Vamp. The application is a prototype mimicking the functionality of an actual industry use case, a document management application, in the context of our research on cloud-native applications. All containers of the prototype can be found on the public Docker Hub for reproducing our results. Interestingly, there is no community site yet for sharing blueprints or other composition artefacts to speed up deploying more complex application parts spanning multiple containers; we nevertheless expect such a facility to become available soon.

The first step was to install Vamp by following the Rancher tutorial on Vamp.io. Subsequently, we could find the graphical dashboard of Vamp running on port 9090, and several backend systems on other ports. The blueprints that describe our architecture are as follows:

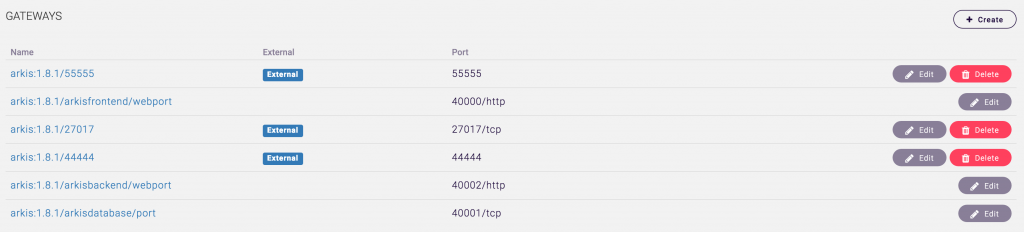

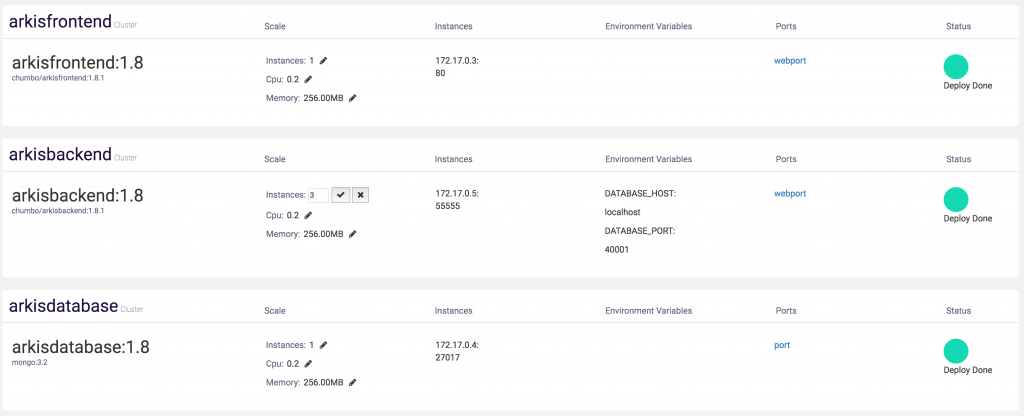

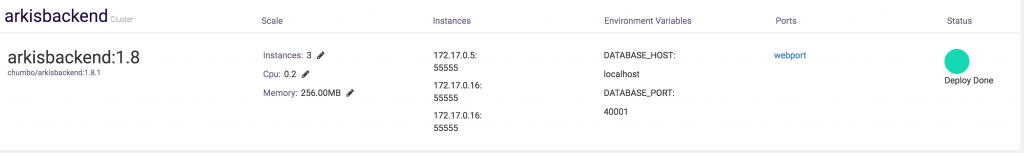

In these images we can see how to translate the docker-compose DSL to the Vamp DSL. After deploying the Vamp blueprint using the dashboard, we can find our gateways and deployments.
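To make the translation step more tangible, here is a minimal Python sketch that maps docker-compose-style service entries to a blueprint-like structure. It is not a Vamp tool. The field names (`gateways`, `clusters`, `services`, `breed`, `deployable`, `scale`, `environment_variables`) follow our reading of the Vamp 0.9 blueprint documentation and should be treated as assumptions to verify; the helper `compose_to_blueprint`, the image names, the port alias `web` and the default CPU and memory values are hypothetical.

```python
import json


def compose_to_blueprint(name, compose_services, gateway_port, entry_service, instances=1):
    """Map docker-compose-style service entries to a Vamp-style blueprint dict.

    Assumption: the output keys mirror the Vamp 0.9 blueprint DSL as we
    understand it; check them against the Vamp documentation before use.
    """
    clusters = {}
    for service_name, spec in compose_services.items():
        breed = {
            "name": f"{service_name}:1.0.0",   # hypothetical version label
            "deployable": spec["image"],       # the container image to run
        }
        # docker-compose "ports" entries look like "host:container";
        # only the container side is kept here.
        if spec.get("ports"):
            container_port = str(spec["ports"][0]).split(":")[-1]
            breed["ports"] = {"web": f"{container_port}/http"}
        # Only the mapping form of "environment" is handled in this sketch.
        if spec.get("environment"):
            breed["environment_variables"] = dict(spec["environment"])
        clusters[service_name] = {
            "services": [{
                "breed": breed,
                # Scaling is a matter of changing the "instances" number.
                "scale": {"cpu": 0.2, "memory": "256MB", "instances": instances},
            }]
        }
    return {
        "name": name,
        "gateways": {str(gateway_port): f"{entry_service}/web"},
        "clusters": clusters,
    }


# Hypothetical three-tier application; these are not our actual images.
compose = {
    "frontend": {"image": "example/frontend:latest", "ports": ["80:8080"]},
    "backend": {"image": "example/backend:latest", "environment": {"DB_HOST": "database"}},
    "database": {"image": "example/database:latest"},
}

blueprint = compose_to_blueprint("docs-app", compose, 9050, "frontend", instances=2)
print(json.dumps(blueprint, indent=2))
```

The sketch ignores links, volumes and dependencies between services, which a real conversion would have to map as well.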

Using the two main advantages that Vamp provides is very easy. For example, we can scale the number of instances by changing a single number.

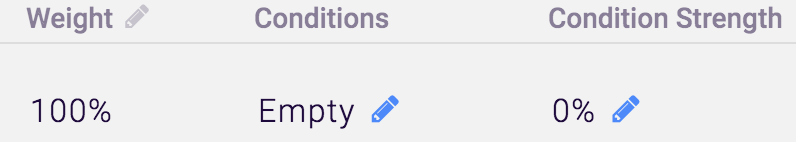

Or, we can do a canary release by just changing the weight or the conditions of the cluster and hence routing requests to only old, only new or mixed deployments of our microservices.
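The effect of the weights can be illustrated with a small Python simulation. This is only a sketch of weight-based traffic splitting in general, not of how Vamp or its load balancer implements routing; the function `pick_route` and the deployment names `old` and `new` are hypothetical, and we assume weights are given as percentages that add up to 100.

```python
import random
from collections import Counter


def pick_route(weights, rng=random):
    """Pick a deployment name with probability proportional to its weight.

    Assumption: weights are integer percentages that sum to 100.
    """
    if sum(weights.values()) != 100:
        raise ValueError("weights must add up to 100")
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


def simulate(weights, requests=10_000, seed=0):
    """Count how many simulated requests each deployment would receive."""
    rng = random.Random(seed)
    return Counter(pick_route(weights, rng) for _ in range(requests))


# Only old, mixed (canary), and only new deployments.
for weights in ({"old": 100, "new": 0}, {"old": 90, "new": 10}, {"old": 0, "new": 100}):
    print(weights, dict(simulate(weights)))
```

With a 90/10 split, roughly one request in ten reaches the new deployment, which limits the impact of a faulty release while still exercising it with real traffic.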

Among the limitations of Vamp is that one must learn a new DSL and its dependencies. For example, we can’t use some of the features or advantages that usually reside in the orchestration scheduler (Kubernetes, Marathon, Rancher, …), and we can’t easily update to a new version of the tool. Some of those disadvantages are, however, an industry-wide problem (namely the lack of standardisation for microservice compositions, even though individual transcoders exist). Among the advantages of using Vamp is that the team behind it is approachable and reacts quickly; for instance, we identified a mistake in one of the tutorials and it was corrected soon after.

Our future work encompasses the evaluation of a more fine-grained application structure with more microservices, the evaluation of more container management tools, and an extended analysis of stateful and stateless microservices.

tnx for the great post on Vamp!

Some additional comments to clarify a few things:

– “we can’t use some of the features or advantages that usually reside in the orchestration scheduler (Kubernetes, Marathon, Rancher, …)”

For this, Vamp has dialects. We currently support Marathon and Docker dialects: http://vamp.io/documentation/using-vamp/blueprints/#dialects and dialect support for K8s and Rancher is coming up.

– “one must learn a new DSL and its dependencies”:

We are improving on this fair point and are working on a conversion tool from common configurations like docker-compose.

– “we can’t update easily to the new version of this tool”

We will be releasing installation and upgrade tools in the near future to make this a much smoother experience.

Thanks again, and if readers of this blog have any remarks, questions or suggestions feel free to reach me at olaf@magnetic.io or on our Gitter channel: https://gitter.im/magneticio/vamp

Thank you for your clarifications. We see the current series of evaluations as a first step and will re-evaluate all systems as they progress. We acknowledge that our blog posts may contain some factual inaccuracies which, by incorporating this feedback, will hopefully be resolved in future, more structured publications about this topic.